The Causal Effect of Survey Mode on Students’ Evaluations of Teaching

Empirical Evidence from Three Field Experiments

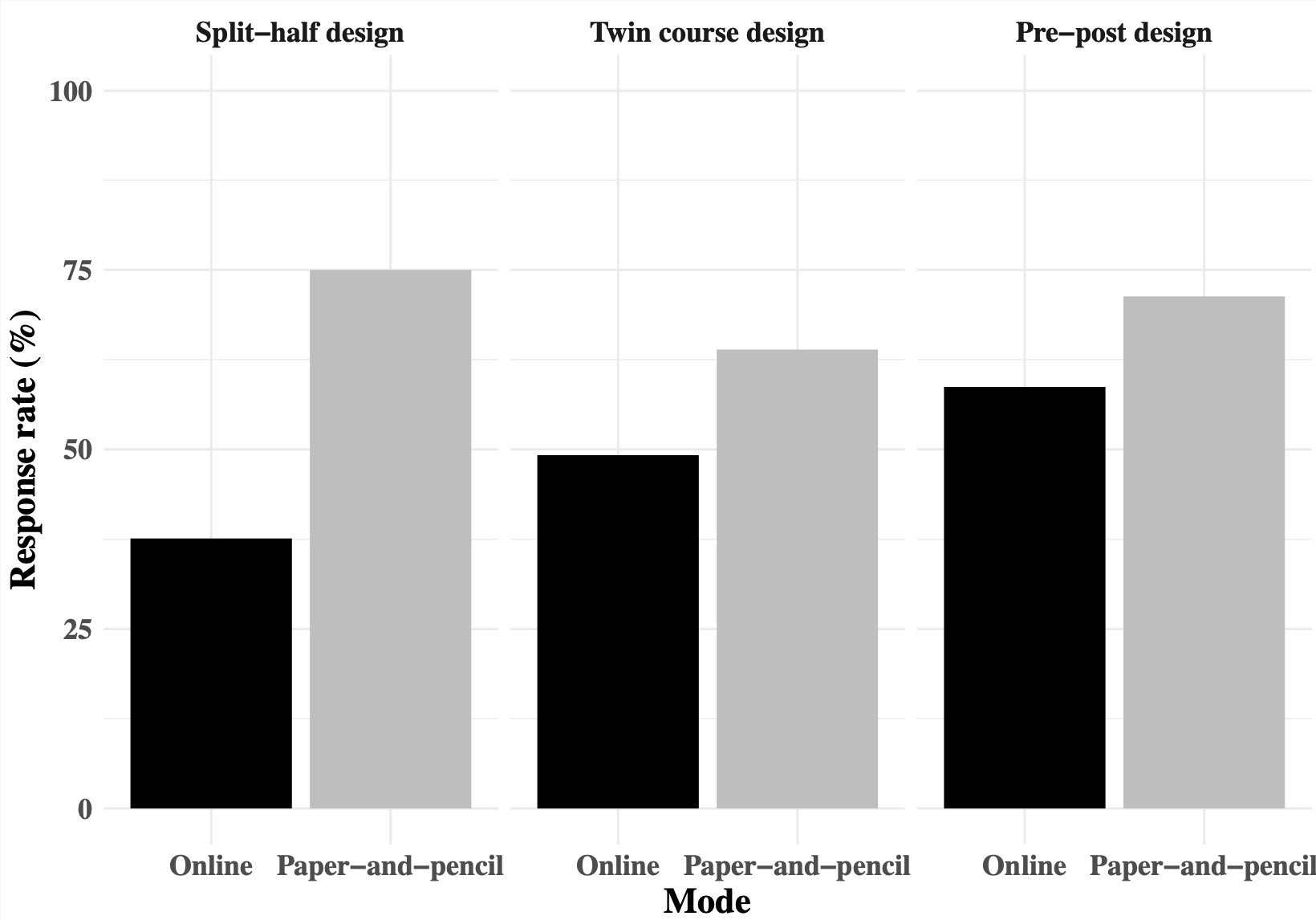

In recent years, many universities have switched from paper-based to online student evaluations of teaching (SET) without knowing the consequences for data quality. Based on a series of three consecutive field experiments (a split-half design, twin courses, and pre–post measurements), this paper examines the effects of survey mode on SET. First, all three studies reveal marked differences in non-response between online and paper-based SET and systematic but small differences in the overall course ratings.

On average, online SET reveal a slightly less optimistic picture of teaching quality in students’ perception. At the same time, a web survey mode does not impair the reliability of student ratings. Second, we highlight the importance of taking selection and class absenteeism into account when studying survey mode effects, and we show that it is necessary and informative to survey the subgroup of no-shows when evaluating teaching. Third, we empirically demonstrate the need to account for the contextual setting of the survey (in class vs. after class) and the specific type of online survey mode (TAN vs. email). Previous research either confounded contextual setting with variation in survey mode or generalized results for a specific online mode to web surveys in general. Our findings suggest that higher response rates in email surveys can be achieved if students are given the opportunity and time to evaluate directly in class.

Cite this article:

Treischl, E. & Wolbring, T. (2017): The Causal Effect of Survey Mode on Students’ Evaluations of Teaching: Empirical Evidence from Three Field Experiments. Res High Educ 58, 904–921. https://doi.org/10.1007/s11162-017-9452-4

- Posted on: September 3, 2017

